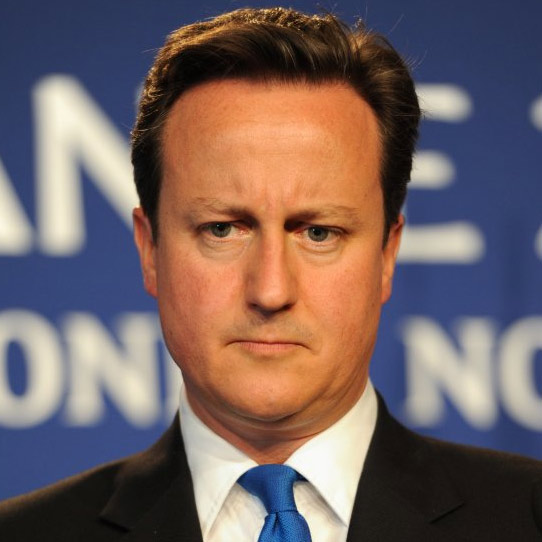

Cameron demands action on child abuse images

Every right-minded person wants to get rid of child abuse images, and stop people from accessing them. But Cameron’s targeting of search engines seems wrong-headed and rather 1995.

Firstly, most child abuse images are circulated in private networks, or are sold by criminal gangs. Banning search terms seems unlikely to combat this serious activity, which is independent of search engines. It may deter casual searches, but that is at best a marginal gain, and certainly not worth a Prime Ministerial announcement, nor threats of legislation implying that this action is critical for child safety.

Let us accept that some identifiable search terms will bring up illegal images. If a list of blacklisted terms exists, then new terms will be invented so that people can find what they want. Thus Cameron invites a game of cat and mouse which is likely to have very limited impact. The new terms may well be words that cannot be banned because they are innocuous. This, for instance, is the kind of reason why the term ‘Lolita’ was adopted to signal such material.

The first thing Cameron should do is ask for statistics about the searches he wants to ban. It would be interesting to know whether this has been requested, but no figures have been quoted so far.

In any case, search engines have moved well beyond being dependent on identifying content through a small set of accurately reproduced keywords. Banning a few terms seems unlikely to prevent any kind of material from being available through search.

Of course, Google and other search engines, once they are aware of such material, will always remove it from their databases. Organisations like the IWF who look for it may well be using search engine terms to find it too, probably in more sophisticated ways than the casual searchers Cameron believes he is trying to target: he should be careful that his policy does not make the work of the IWF more difficult.

It is also being reported that Cameron is going to say tomorrow to Google, Microsoft and Yahoo! that:

You’re the people who take pride in doing what they say can’t be done.

You hold hackathons for people to solve impossible internet conundrums.

Well – hold a hackathon for child safety.

Set your greatest brains to work on this.

On the face of it, this would be an open invitation to the public — in that any member of the public can create a search engine, or any other kind of network software — to engage in an activity that would almost certainly result in a violation of the Sexual Offences Act 2003*. One may well expect someone to use this in a future defence: “I only downloaded those images to help train an algorithm to block them”.

The real way this offensive and rightly illegal material is combated is through takedown and the targeting of criminals. Takedowns of child abuse image websites still take days, rather than the hours taken in the case of bank phishing attempts. More could therefore be done to make sure international co-operation improves the speed at which images are taken down.

CEOP have done work to restrict the use of payment mechanisms, with some success, but more could be done to stop the money laundering that is necessary for images to be traded.

Search engines are an easy target. They cannot be seen to aid criminality as serious as paedophilia. They will therefore be under immense pressure to do what Cameron asks, no matter if it is counter-productive or simply irrelevant to his aims. Cameron will be able to chalk up a victory and move on to the next Daily Mail headline-generating press stunt.

Should we, therefore, care? We should: it is embarrassing for our Prime Minister to stand up and demand a policy that is likely to be of highly marginal impact, and discuss it as if it were of vital national interest, while failing to concentrate on the real answers.

Cameron’s announcement is symptomatic of the way the Internet is viewed and treated by policy makers. The technical challenges and consequences of policies are viewed as less important than the moral purpose justifying calls for action. Policies are announced before they have been properly considered. And worse, these announcements risk being another case of blaming the commercial intermediaries – in this case search engines – because that is easier and cheaper than doing what is really necessary.

* Actually, Section 1 of the Protection of Children Act 1978 and section 160 of the Criminal Justice Act 1988.